Okay, stop everything. Let's get real here: Anthropic just made a rookie mistake and now we all know what they were hiding in their labs. And trust me, this isn't small stuff.

Here's the simple version: a Fortune journalist gets a tip from two security researchers. They poke around Anthropic's CMS — the system they use to manage content — and what do they find? Everything public. Literally. Thousands of files, drafts, internal documents, exposed because someone left the setting on "public" instead of "private."

Ha. So much for the AI experts, right? The company teaching us how to use artificial intelligence safely can't even configure its own content management system properly. The irony is deafening.

The monster they built

But let's get to the meat of it. Among these files was the description of Claude Mythos — or Capybara, as they call it internally. And folks, this is a total step change.

Let me give you a practical example: Claude Opus 4.6, already Anthropic's strongest model, beats everyone in coding tests. Now imagine something that "dramatically exceeds" Opus not in one area but in three: programming, academic reasoning, and (pay attention here) cybersecurity.

Here's the sentence that gives you chills, straight from the document we were never supposed to read: "Currently far ahead of any other AI model in cyber capabilities."

But it doesn't end there. They add: "Foreshadows an imminent wave of models capable of exploiting vulnerabilities in ways that far outpace defenders' efforts."

Translation: we're about to enter a world where AI finds holes in your company faster than you can patch them. And Anthropic knows it. Actually, Anthropic is scared of it.

The fear is written between the lines

Look at what they say in the same leaked draft: "As we prepare to release Claude Capybara, we want to exercise extra caution and understand the risks it poses — even beyond what we learn in our testing."

Translation from corporate speak to plain English: "This stuff is dangerous and we're not entirely sure how much."

So what do they do? They don't release it to everyone. They only give it to cyber defense organizations. Groups that should use it to protect themselves, not to attack.

But here's the problem, and please follow me because this matters: in November 2025, Anthropic disclosed that a Chinese state-sponsored group had used Claude Code, Anthropic's coding tool, to target roughly 30 organizations worldwide, and a small number of those intrusions succeeded.

Thirty. Banks. Tech companies. Government agencies. And Anthropic only found out after the fact. So when they say they want to be cautious with Mythos... well, the precedent isn't reassuring.

The market got it immediately

And you know who understood the gravity of the situation immediately? The markets.

On Friday, when the news broke, shares of Palo Alto Networks, CrowdStrike, and Fortinet fell 4 to 6%.

Why? Simple: if an AI exists that can find and exploit vulnerabilities better than any human team, the entire traditional cybersecurity sector becomes... obsolete? Useless? Or at least in serious trouble?

Two Wall Street analysts tried to reassure everyone by saying we need "more cybersecurity, not less." But excuse me, if the attack is completely automated and scales infinitely, how many human experts do we need to counter it? One hundred thousand? One million? And where do we find them?

The race with OpenAI: whoever wins, loses?

And there's another story you need to know. While Anthropic was trying to keep Mythos secret, OpenAI completed training on their frontier model, codenamed "Spud." Two days before the leak.

Sam Altman reportedly said internally that this system can "accelerate the entire economy."

Both companies want to IPO in 2026. Both want to prove they have the strongest model. It's a race, and in a race you run fast. Maybe too fast.

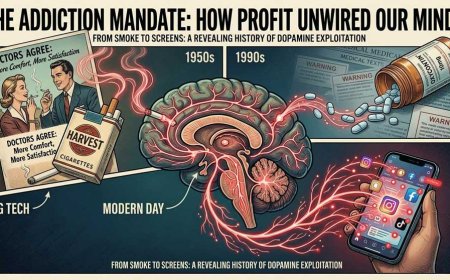

Because here's the point: none of us voted for this. Nobody decided we want AI capable of breaking through any cyber defense. Nobody discussed whether we're ready for a world where attack is automatic and defense is... what exactly?

The CEO retreat: the elite deciding for all of us

And you know what was in those leaked files? Details of a two-day exclusive retreat for European CEOs at an 18th-century English country estate.

Dario Amodei, Anthropic's CEO, is set to present "Claude capabilities not yet released" there. An "intimate" event for "deliberative conversations."

Translation: the super-rich and super-powerful will get a preview of what the future holds for us, while we mere mortals wait and hope everything doesn't blow up.

I don't know about you, but this doesn't sit right with me. We talk about democratizing AI, access for everyone, and then the real decisions get made in an English castle between champagne and budget approvals?

Pros and cons: let's do the math

✅ The upside, if we survive to see it

- Revolutionary coding: software written by AI that actually understands what it's doing, not just fumbling and hoping
- Proactive defense: finding the holes before the bad guys do, for once
- Intelligent automation: complex workflows handled autonomously, not just single stupid tasks anymore

⚠️ The dark side, and it's heavy

- Automated cyberwarfare: rogue states and criminals with cyber weapons that never sleep
- Asymmetric speed: attack is instant, defense requires human time
- The Chinese precedent: if they already abused Claude Code, what will they do with Mythos?
- The CMS error: if they can't protect their own files, how will they protect the most dangerous model they ever built?

The truth that scares us

Let me tell you what I think, and maybe I'm wrong, but this needs to be said: Anthropic is afraid of its own creation. You can read it between the lines of their documents. You can see it in their excessive caution about the release. You can sense it in the fact that they're prioritizing "defenders," as if they're trying to fix things before it's too late.

But the question is: is it already too late?

When a model like this exists, even if "secret," even if "limited," the genie is out of the bottle. Sooner or later someone will replicate it. Sooner or later someone will use it for malicious purposes. Sooner or later, and here's the kicker, there will be no difference left between attack and defense, because both will be AI versus AI, and we humans will just be spectators.

Who controls whom?

Let's close with a reflection. Anthropic was founded by ex-OpenAI employees who wanted to do things "the right way," with more safety, more ethics, more attention to risks.

Yet here they are, with a model they describe as "the most capable ever built" and simultaneously describe as an unprecedented risk to global cybersecurity.

The CMS error is symbolic: even those who should control AI lose control. And if they lose control of files, what happens when they lose control of the models?

We'll continue to watch, to hope the "good guys" beat the "bad guys," to pretend this technology is neutral. But it's not. It's power. And power, as we know, corrupts.

Mythos is out. Or rather, it was out for a while, before we noticed. The question isn't if it will change everything, but when. And whether we'll be ready.